What Does It Mean to Be AI Native?

July 14, 2025

Do you ever wonder how TikTok always seems to know what you want to watch—even before you do? Or how your email app drafts a reply that sounds uncannily like something you’d say? It’s easy to assume these are just AI-powered features—clever additions to otherwise traditional software.

But what if that’s not the full story?

What if these systems weren’t just using AI—but built around it? Welcome to the emerging world of AI Native products.

Understanding AI Native

The term “AI Native” may still feel unfamiliar. It hasn’t yet made its way into every tech blog or conference keynote. But it’s quietly becoming one of the most important shifts in how digital systems are built—and how they behave.

So, what is it?

AI Native systems are those that are built from the ground up with artificial intelligence at their core—not as a feature, but as the foundation. These products don’t simply use AI to enhance tasks—they rely on AI to function, adapt, and improve continuously. Remove the AI, and the product loses its identity.

Just as cloud-native applications were built to thrive in distributed, scalable cloud environments, AI-native products are purpose-built to learn, predict, and evolve in real time.

Where Did the Concept Come From?

While the term “AI Native” is relatively new, the thinking behind it isn’t. Now that large-scale AI infrastructure is widely accessible, it is finally viable to build systems that are intelligent by design rather than merely enhanced by AI. Real-time inference, model feedback loops, and edge AI capabilities have made self-learning systems practical across industries.

From network infrastructure to enterprise software and consumer apps, teams are moving away from feature-based intelligence toward holistic architectures that behave more like adaptive systems than static tools.

How AI Native Is Being Applied Today

The defining trait of an AI-native system is that intelligence isn’t a feature—it’s the architecture. It’s not about layering a chatbot on top of a static interface. It’s about designing systems that think, adapt, and evolve—from the inside out.

Here’s how AI-native thinking is already transforming modern software and infrastructure:

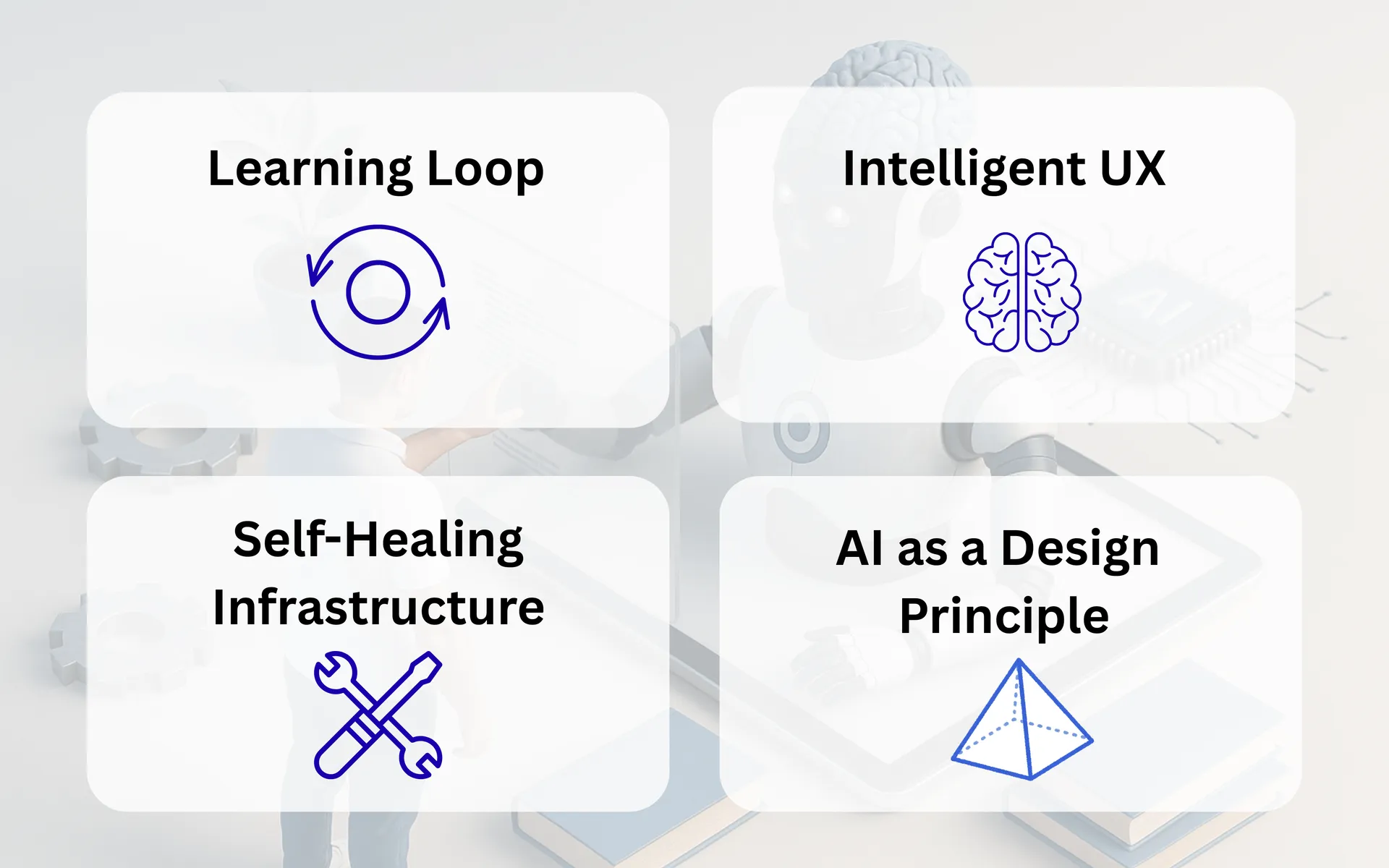

1. Continuous Learning Loops

AI-native systems don’t just automate—they adapt. Every interaction becomes part of a feedback loop that helps the system refine its responses. Think of a productivity tool that drafts emails in your style or prioritizes tasks based on your habits. The more you use it, the more aligned it becomes.
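The feedback loop described above can be sketched in a few lines. This is a minimal illustration, not how any particular product works: it assumes each interaction is reduced to a category label plus an accept/reject signal, and `PreferenceModel` and its learning rate are hypothetical. Each signal nudges the category’s score toward 1.0 or 0.0 using an exponential moving average, so recent behavior counts more than old behavior.

```python
from collections import defaultdict


class PreferenceModel:
    """Hypothetical per-user preference store updated by an exponential moving average."""

    def __init__(self, learning_rate: float = 0.2):
        self.learning_rate = learning_rate
        # Every unseen category starts at a neutral score of 0.5.
        self.scores = defaultdict(lambda: 0.5)

    def record_feedback(self, category: str, accepted: bool) -> None:
        # Move the score toward 1.0 on acceptance and toward 0.0 on rejection.
        target = 1.0 if accepted else 0.0
        current = self.scores[category]
        self.scores[category] = current + self.learning_rate * (target - current)

    def rank(self, categories: list[str]) -> list[str]:
        # Suggest the highest-scoring categories first.
        return sorted(categories, key=lambda c: self.scores[c], reverse=True)


model = PreferenceModel()
for _ in range(3):
    model.record_feedback("deep-work", accepted=True)
model.record_feedback("status-meeting", accepted=False)
print(model.rank(["status-meeting", "email", "deep-work"]))  # ['deep-work', 'email', 'status-meeting']
```

The learning rate controls how quickly the system “aligns” with you: a higher value adapts faster but also forgets older habits faster.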

2. Intelligence That Shapes the User Experience

These products use each user’s real-time behavior to shape what they see and experience. Instead of following static flows, the interface adapts to preferences and context. It’s not just about recommending content; it’s about shaping the journey itself. Your actions don’t just trigger responses; they guide how the system behaves.
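As a rough sketch of a context-driven interface, assume the app logs which panel a user opens and tags each event with a coarse context label. `AdaptiveLayout`, the panel names, and the context labels are all hypothetical. The layout for a context is simply the designer’s default order, re-sorted by how often the user opened each panel in that context.

```python
from collections import Counter, defaultdict


class AdaptiveLayout:
    """Hypothetical layout engine that orders home-screen panels by what the
    user actually opens in a given context (e.g. "morning", "evening")."""

    def __init__(self, default_order: list[str]):
        self.default_order = default_order
        self.usage = defaultdict(Counter)  # context -> Counter of panel opens

    def log_open(self, context: str, panel: str) -> None:
        self.usage[context][panel] += 1

    def layout_for(self, context: str) -> list[str]:
        counts = self.usage[context]
        # Most-opened panels first; sorted() is stable, so ties keep the default order.
        return sorted(self.default_order, key=lambda panel: -counts[panel])


layout = AdaptiveLayout(["inbox", "calendar", "news", "tasks"])
layout.log_open("morning", "news")
layout.log_open("morning", "news")
layout.log_open("morning", "calendar")
layout.log_open("evening", "tasks")
print(layout.layout_for("morning"))  # ['news', 'calendar', 'inbox', 'tasks']
print(layout.layout_for("evening"))  # ['tasks', 'inbox', 'calendar', 'news']
```

A context with no history falls back to the default order, which keeps the interface predictable until there is evidence to adapt.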

3. Infrastructure That Thinks for Itself

AI-native systems extend intelligence to the backend. They can detect issues before they affect users, reroute traffic, and retrain models without manual input. This goes beyond automation: it’s resilience by design. Operations teams shift from fixing errors by hand to overseeing the system’s autonomous adjustments.
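The rerouting half of that idea can be illustrated with a deliberately simple sketch. It assumes every request outcome is reported back as success or error; `SelfAdjustingRouter`, the window size, and the 25% error threshold are hypothetical, and a production system would more likely rely on a learned anomaly model than a fixed threshold. Traffic goes to the first backend, in priority order, whose recent error rate is acceptable.

```python
from collections import deque


class SelfAdjustingRouter:
    """Hypothetical router that moves traffic off a backend whose recent
    error rate exceeds a threshold, and moves it back once the backend recovers."""

    def __init__(self, backends: list[str], window: int = 20, max_error_rate: float = 0.25):
        self.backends = backends  # in priority order
        self.max_error_rate = max_error_rate
        # Keep only the most recent `window` outcomes per backend (True = error).
        self.history = {b: deque(maxlen=window) for b in backends}

    def record(self, backend: str, error: bool) -> None:
        self.history[backend].append(error)

    def error_rate(self, backend: str) -> float:
        outcomes = self.history[backend]
        return sum(outcomes) / len(outcomes) if outcomes else 0.0

    def choose(self) -> str:
        # Route to the first backend whose recent error rate is acceptable.
        for backend in self.backends:
            if self.error_rate(backend) <= self.max_error_rate:
                return backend
        # Every backend is degraded: fall back to the least-bad one.
        return min(self.backends, key=self.error_rate)


router = SelfAdjustingRouter(["primary", "secondary"], window=10)
for _ in range(5):
    router.record("primary", error=True)
print(router.choose())  # secondary
for _ in range(10):
    router.record("primary", error=False)
print(router.choose())  # primary
```

Because the history window is bounded, old failures age out and traffic returns to the primary automatically, with no operator involved.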

4. AI as a First-Class Design Principle

In AI-native products, intelligence isn’t layered onto the stack—it defines the stack. These systems include components like vector memory, decision loops, and contextual learning as native capabilities. It’s the difference between embedding AI and architecting around it.

Rather than treat AI like an isolated layer, AI-native teams rethink the entire product model—how data flows, how feedback is captured, and how intelligence is surfaced to the user.
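To make “vector memory” concrete, here is a toy in-memory store that retrieves stored facts by cosine similarity. The three-dimensional embeddings are hand-written stand-ins; a real system would obtain them from an embedding model and would typically use a dedicated vector index, neither of which is shown. `VectorMemory` is a hypothetical name.

```python
import math


class VectorMemory:
    """Toy vector store: keeps (embedding, text) pairs and returns the texts
    whose embeddings are most similar to a query by cosine similarity."""

    def __init__(self):
        self.items: list[tuple[list[float], str]] = []

    def add(self, embedding: list[float], text: str) -> None:
        self.items.append((embedding, text))

    @staticmethod
    def cosine(a: list[float], b: list[float]) -> float:
        dot = sum(x * y for x, y in zip(a, b))
        norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
        return dot / norm if norm else 0.0

    def search(self, query: list[float], k: int = 1) -> list[str]:
        # Brute-force ranking of every stored item; fine for a toy, real stores use an index.
        ranked = sorted(self.items, key=lambda item: self.cosine(query, item[0]), reverse=True)
        return [text for _, text in ranked[:k]]


memory = VectorMemory()
memory.add([1.0, 0.0, 0.0], "User prefers morning meetings")
memory.add([0.0, 1.0, 0.0], "User writes short, informal emails")
memory.add([0.0, 0.0, 1.0], "User works in the Berlin time zone")
print(memory.search([0.9, 0.1, 0.0]))  # ['User prefers morning meetings']
```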

Beyond Startups: A Strategic Shift Across the Ecosystem

AI-native architecture is no longer just an experiment—it’s shaping how leading platforms rethink their foundations. Product roadmaps now prioritize:

- Fine-tuned models on proprietary data

- Real-time inference for dynamic user interactions

- Continuous feedback loops that evolve system logic

- Built-in governance for trust and explainability

This isn’t about chasing trends—it’s about building systems that learn as fast as their users. And that shift brings both opportunity and new responsibility.

The Benefits and Challenges of Going AI Native

Like any foundational shift in software architecture, moving toward AI Native unlocks tremendous advantages—but it also introduces a new class of complexity. It’s not a plug-and-play transformation. It’s a commitment to building systems that learn, evolve, and sometimes behave in ways you didn’t explicitly program.

Let’s unpack both sides:

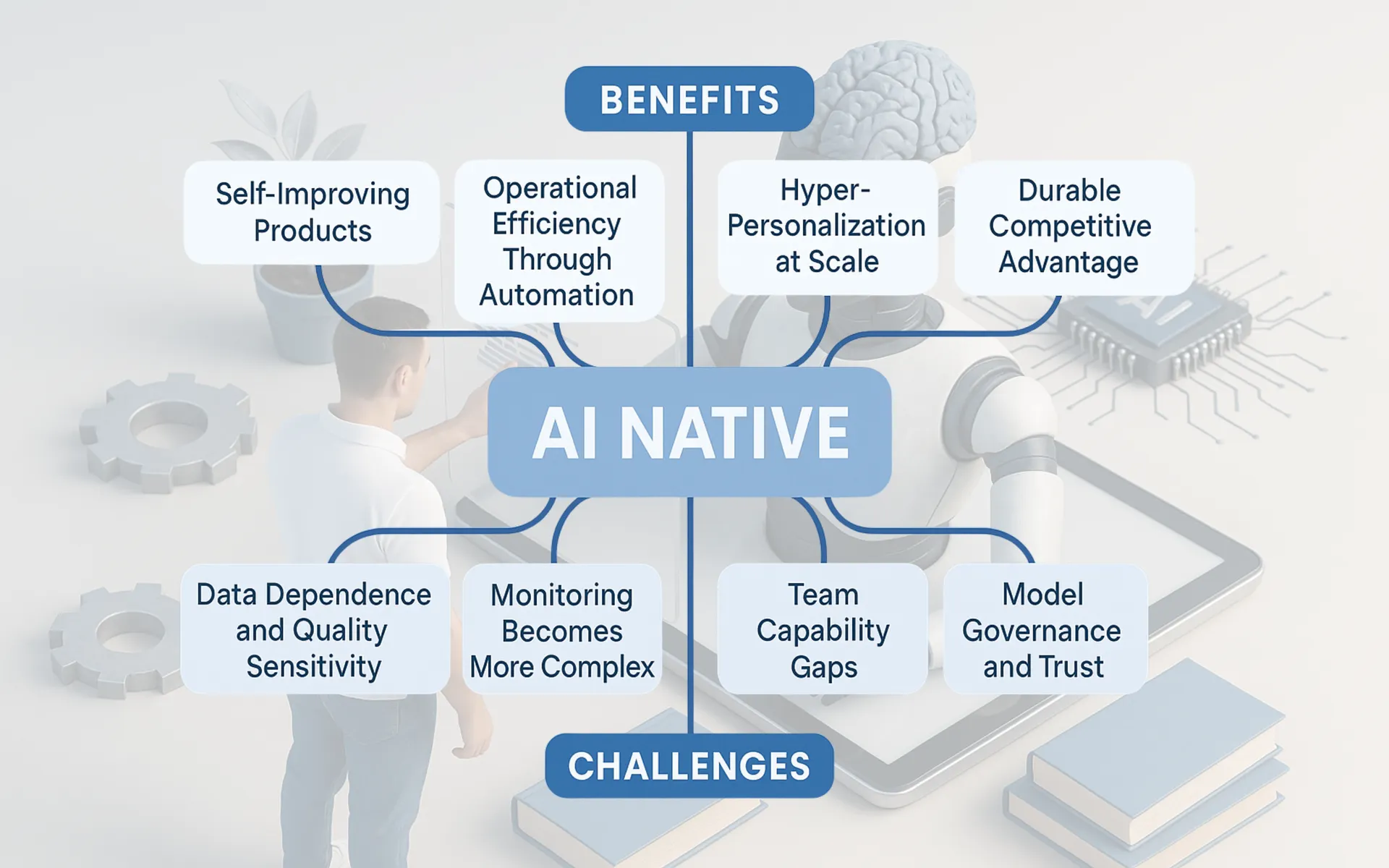

Benefits of AI Native

1. Self-Improving Products

Every user interaction becomes fuel for improvement. AI-native systems are architected to capture feedback loops, learn from them, and modify their behavior—often without human intervention. Over time, the product becomes more efficient, more helpful, and more aligned with actual user needs.

Example: An AI-native scheduling assistant can learn how you prefer to arrange meetings, decline invitations that would interrupt your focus time, and even suggest better time slots based on historical acceptance rates.
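The “historical acceptance rates” part can be sketched as follows, assuming the assistant keeps a log of past invitations as (slot, accepted) pairs. `rank_slots` and the slot labels are hypothetical. Laplace smoothing, (accepted + 1) / (sent + 2), gives never-tried slots a neutral score of 0.5 instead of an undefined or zero rate, so the assistant can still propose them.

```python
from collections import defaultdict


def rank_slots(history: list[tuple[str, bool]], candidates: list[str]) -> list[str]:
    """Rank candidate meeting slots by their smoothed historical acceptance rate."""
    sent = defaultdict(int)
    accepted = defaultdict(int)
    for slot, was_accepted in history:
        sent[slot] += 1
        accepted[slot] += int(was_accepted)

    def score(slot: str) -> float:
        # Laplace smoothing: an unseen slot scores 0.5.
        return (accepted[slot] + 1) / (sent[slot] + 2)

    return sorted(candidates, key=score, reverse=True)


history = [
    ("Mon 09:00", False), ("Mon 09:00", False),
    ("Tue 10:00", True), ("Tue 10:00", True), ("Tue 10:00", False),
]
print(rank_slots(history, ["Mon 09:00", "Wed 14:00", "Tue 10:00"]))
# ['Tue 10:00', 'Wed 14:00', 'Mon 09:00']
```

Here Tuesday scores 3/5 = 0.6, the untried Wednesday slot 0.5, and Monday 1/4 = 0.25.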

2. Hyper-Personalization at Scale

Static rules can personalize experiences to an extent—but AI-native systems can respond to subtle patterns in real-time behavior. They don’t just segment users—they tailor interactions down to the individual level, learning from preferences, tone, pacing, context, and intent.

This means fewer generic experiences. Interfaces become anticipatory, not reactive.
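One common way to tailor behavior to each individual is a multi-armed bandit kept per user. The sketch below uses epsilon-greedy selection; `PerUserBandit` and the “formal”/“casual” variants are hypothetical, and this is one possible technique, not the method any specific product uses. With probability `epsilon` it explores a random variant; otherwise it exploits the variant with the best observed reward rate for that particular user.

```python
import random
from collections import defaultdict


class PerUserBandit:
    """Epsilon-greedy bandit with separate statistics for every user."""

    def __init__(self, variants: list[str], epsilon: float = 0.1, seed: int = 0):
        self.variants = variants
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        # stats[user][variant] = [times shown, times rewarded]
        self.stats = defaultdict(lambda: {v: [0, 0] for v in variants})

    def choose(self, user: str) -> str:
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.variants)  # explore
        stats = self.stats[user]

        def reward_rate(variant: str) -> float:
            shown, rewarded = stats[variant]
            # Optimistic default: an untried variant looks as good as possible, so it gets tried.
            return rewarded / shown if shown else 1.0

        return max(self.variants, key=reward_rate)  # exploit

    def update(self, user: str, variant: str, rewarded: bool) -> None:
        counts = self.stats[user][variant]
        counts[0] += 1
        counts[1] += int(rewarded)


bandit = PerUserBandit(["formal", "casual"], epsilon=0.0)  # epsilon=0: pure exploitation for the demo
bandit.update("ana", "formal", rewarded=False)
bandit.update("ana", "casual", rewarded=True)
bandit.update("ben", "formal", rewarded=True)
bandit.update("ben", "casual", rewarded=False)
print(bandit.choose("ana"), bandit.choose("ben"))  # casual formal
```

The same system ends up treating Ana and Ben differently, which is the difference between segmenting users and personalizing for individuals.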

3. Operational Efficiency Through Automation

AI-native infrastructure is often designed to monitor, repair, and optimize itself. Instead of writing scripts for every potential edge case, teams can train systems to detect anomalies and take corrective actions automatically. This reduces downtime, simplifies maintenance, and frees up engineers to focus on high-leverage problems.

Think self-healing pipelines, autonomous feature tuning, and proactive issue detection.
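Proactive issue detection often starts with something as simple as a rolling z-score. The sketch below is illustrative only: `RollingAnomalyDetector`, the 3-standard-deviation threshold, and the latency numbers are hypothetical, and the remediation step is a print statement standing in for a real corrective action.

```python
import statistics
from collections import deque


class RollingAnomalyDetector:
    """Flags a value as anomalous when it lies more than `threshold` standard
    deviations from the mean of the recent window (a rolling z-score)."""

    def __init__(self, window: int = 30, threshold: float = 3.0, min_samples: int = 5):
        self.values = deque(maxlen=window)
        self.threshold = threshold
        self.min_samples = min_samples

    def observe(self, value: float) -> bool:
        is_anomaly = False
        if len(self.values) >= self.min_samples:
            mean = statistics.fmean(self.values)
            stdev = statistics.pstdev(self.values)
            # A perfectly flat baseline (stdev 0) is never flagged in this sketch.
            is_anomaly = stdev > 0 and abs(value - mean) / stdev > self.threshold
        if not is_anomaly:
            # Learn only from normal points so an incident doesn't become the new baseline.
            self.values.append(value)
        return is_anomaly


detector = RollingAnomalyDetector()
for ms in [102, 98, 101, 99, 100, 103, 97, 450]:
    if detector.observe(ms):
        print(f"anomaly: {ms} ms, triggering remediation")  # placeholder for e.g. restarting a worker
```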

4. Durable Competitive Advantage

Traditional software can be copied—features replicated, designs cloned. But learning loops create compounding differentiation. The more the system is used, the smarter and more tuned it becomes—based on proprietary user data. This makes replication not just difficult, but often strategically futile.

Products become better because they’re used—a virtuous cycle hard for competitors to match.

Challenges of AI Native

1. Data Dependence and Quality Sensitivity

AI-native systems are only as good as the data they consume. Noisy, biased, or incomplete data can degrade performance, misguide learning loops, and ultimately erode trust. Unlike traditional software, poor data doesn’t just cause bugs—it reshapes system behavior.

This requires rigorous data hygiene practices, pipeline observability, and real-time validation mechanisms.
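A minimal sketch of real-time validation, assuming interaction events carry a user id, an item id, a rating on a 1 to 5 scale, and a Unix timestamp. The `Interaction` schema, the rating range, and the 60-second clock-skew allowance are hypothetical. Records that fail a check are quarantined with their reasons instead of silently entering the training data.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    user_id: str
    item_id: str
    rating: float
    timestamp: float  # Unix seconds


def validate(record: Interaction, now: float) -> list[str]:
    """Return the reasons a record should be kept out of training (empty list = OK)."""
    problems = []
    if not record.user_id or not record.item_id:
        problems.append("missing id")
    if not 1.0 <= record.rating <= 5.0:  # also rejects NaN
        problems.append("rating out of range")
    if record.timestamp > now + 60:  # allow a little clock skew
        problems.append("timestamp in the future")
    return problems


def split_batch(batch: list[Interaction], now: float):
    """Split a batch into clean records and (record, reasons) pairs to quarantine."""
    clean, quarantined = [], []
    for record in batch:
        problems = validate(record, now)
        if problems:
            quarantined.append((record, problems))
        else:
            clean.append(record)
    return clean, quarantined


now = 1_700_000_000.0
batch = [
    Interaction("u1", "i1", 4.0, now - 10),
    Interaction("", "i2", 3.0, now),
    Interaction("u3", "i3", 9.0, now + 3600),
]
clean, quarantined = split_batch(batch, now)
for record, problems in quarantined:
    print(record.item_id, problems)
```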

2. Model Governance and Trust

Continuous learning is powerful—but also risky. Models can drift from intended behavior. Feedback loops can reinforce bias. In customer-facing applications, this can result in hallucinations, unfair recommendations, or opaque decisions.

Governance isn’t optional. Teams need controls for explainability, versioning, rollback, and auditability baked into their workflows.
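Three of those controls (versioning, rollback, and auditability) can be sketched with a small in-memory registry. This is an illustration, not any real registry’s API: `ModelRegistry`, its methods, and the metric names are hypothetical, and explainability is out of scope here. Every registration, promotion, and rollback is written to a timestamped audit log.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ModelRegistry:
    versions: dict = field(default_factory=dict)   # version -> metadata (e.g. eval metrics)
    history: list = field(default_factory=list)    # stack of previously active versions
    active: Optional[str] = None
    audit_log: list = field(default_factory=list)

    def _log(self, event: str) -> None:
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append(f"{stamp} {event}")

    def register(self, version: str, metrics: dict) -> None:
        self.versions[version] = metrics
        self._log(f"registered {version} {metrics}")

    def promote(self, version: str) -> None:
        if version not in self.versions:
            raise KeyError(f"unknown model version: {version}")
        if self.active is not None:
            self.history.append(self.active)
        self.active = version
        self._log(f"promoted {version}")

    def rollback(self) -> str:
        if not self.history:
            raise RuntimeError("no earlier version to roll back to")
        bad = self.active
        self.active = self.history.pop()
        self._log(f"rolled back {bad} -> {self.active}")
        return self.active


registry = ModelRegistry()
registry.register("v1", {"auc": 0.81})
registry.register("v2", {"auc": 0.83})
registry.promote("v1")
registry.promote("v2")
registry.rollback()  # e.g. after v2 drifts in production
print(registry.active)  # v1
```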

3. Monitoring Becomes More Complex

Conventional code is largely deterministic: the same input produces the same output. AI-native systems are probabilistic and adaptive. That means traditional monitoring, based on fixed thresholds or rule-based alerts, often falls short. You now need to track model performance over time, detect concept drift, and monitor output quality continuously.

Observability tools must evolve to track how the system learns and behaves, not just whether it is up.
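One widely used drift check is the Population Stability Index (PSI), which compares a feature’s (or a model score’s) live distribution against its training-time baseline: PSI = Σ (actualᵢ − expectedᵢ) · ln(actualᵢ / expectedᵢ), summed over bins of proportions. A frequently cited rule of thumb treats values above roughly 0.25 as a significant shift, but the threshold is a team decision. The function name, bin count, and `eps` floor below are assumptions of this sketch.

```python
import math


def population_stability_index(expected: list[float], actual: list[float],
                               bins: int = 10, eps: float = 1e-6) -> float:
    """PSI between a baseline sample (`expected`) and a live sample (`actual`).

    Bin edges come from the baseline's range; `eps` avoids log(0) for empty bins.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp values outside the baseline range into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]      # scores seen at training time
live = [0.5 + i / 200 for i in range(100)]    # live scores, shifted upward
print(population_stability_index(baseline, baseline))  # 0.0
print(population_stability_index(baseline, live) > 0.25)  # True
```

Run on a schedule, a check like this turns “detect concept drift” into an alert that can feed the same governance workflow as a rollback.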

4. Team Capability Gaps

Building AI-native products requires a deeply interdisciplinary approach. Product managers must understand how feedback loops influence UX. Engineers need fluency in ML infrastructure. Data scientists must collaborate tightly with design and ops. These aren’t just technical shifts—they’re cultural ones.

The gap isn’t just skill—it’s organizational readiness.

In short: AI-native systems are powerful—but they’re also alive. They require not just smart algorithms, but ongoing care. Strategy, architecture, data, and team culture must align for the system to thrive safely.

Final Thoughts

The idea of building AI-native systems is still taking shape, but the shift is already underway. As infrastructure becomes smarter and users expect more intuitive digital experiences, the systems that thrive will be those that can learn as fast as their users evolve.

AI Native isn’t just a technical model—it’s a reflection of how software is learning to meet us where we are, and grow with us.

So here’s a question for you:

How do you see AI-native systems shaping your world? Are you building one? Using one? Skeptical of the term?